How to use Detectron2 for Rotated Bounding Box Detection on a Custom Dataset?

Going from aligned to rotated bounding boxes is conceptually simple, but technically not so much.

Model families such as YOLOv5 and Detectron2 only offer axis-aligned bounding boxes out-of-the-box. What if the boxes should be rotated? (Image Source: Clem Onojeghuo from Pexels)

A large share of today’s object detection work revolves around detecting bounding boxes. There are multiple out-of-the-box solutions for this, such as YOLOv5 or Meta’s Detectron2. There’s a catch, however: the vanilla versions of these solutions only detect axis-aligned bounding boxes. What if the objects that you want to detect are rotated?

In this tutorial, we’ll use images of ships as an example. As you can see from the image below, the ships are not aligned with the horizontal and vertical axes of the image, so we want to detect them using rotated bounding boxes.

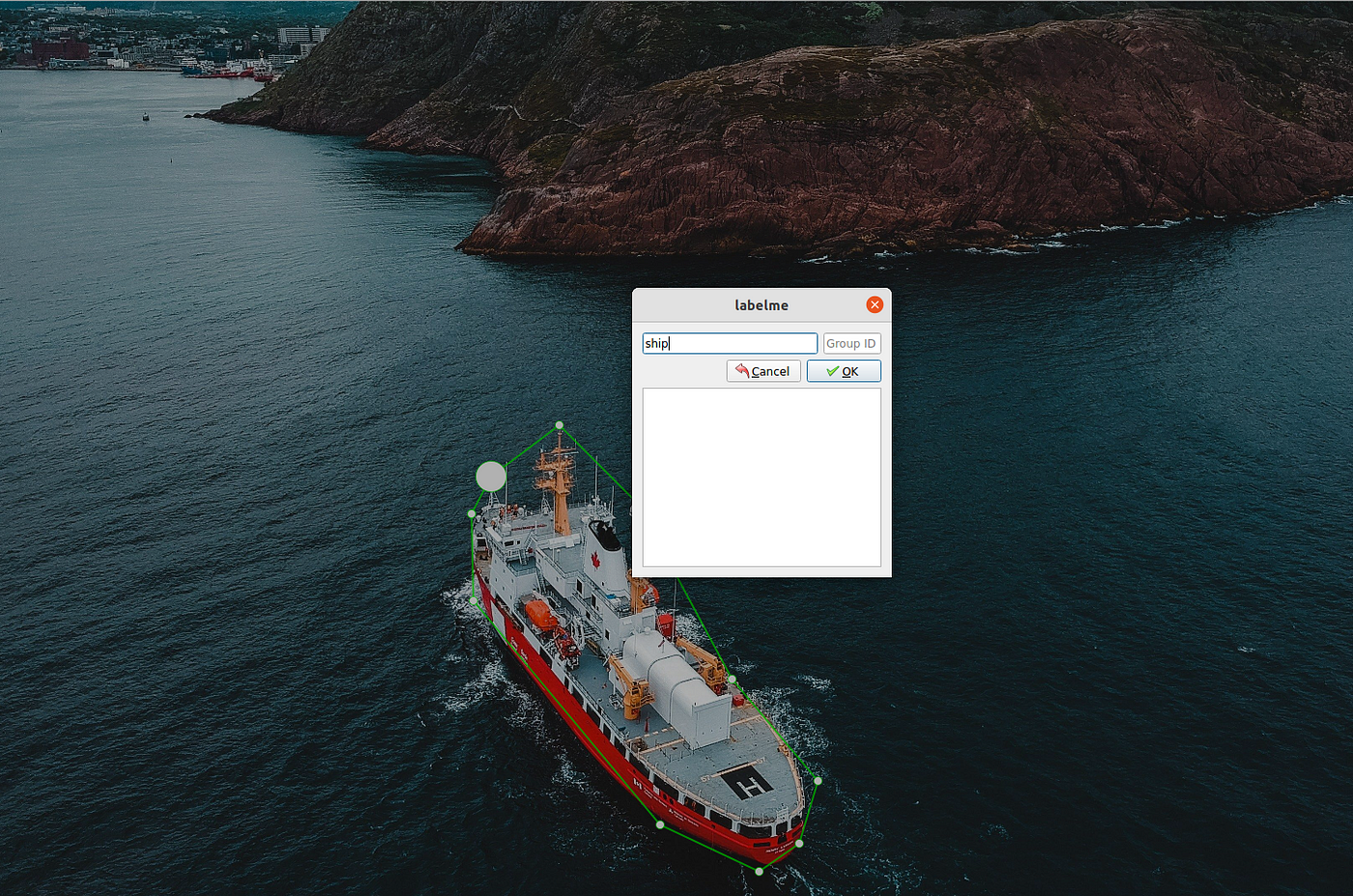

We are using labelme (https://github.com/wkentaro/labelme) polygon annotation to label the ship in the image. (Image Source: Erik Mclean from Pexels)

The rest of the tutorial will show you:

- How to produce rotated bounding box labels using labelme for custom datasets

- How to configure and set up training for rotated bounding box detection using Detectron2

- How to visualize predictions on unseen images

Producing rotated bounding box labels for custom datasets

A great example of a detection task that requires rotated bounding boxes is detecting various elements in aerial or satellite images. Ready-made labeled datasets such as DOTA exist, but oftentimes commercial use of such datasets is prohibited or the elements or objects to detect do not entirely match the actual use case.

The tool that we are going to use for creating the rotated bounding box annotations for a custom dataset is called labelme. Labelme specifically supports labeling objects using polygons, which can easily be used to extract the smallest-area rotated bounding box.
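To make the "polygon to smallest-area rotated box" step concrete, here is a minimal pure-Python sketch. It is not the code from the example repo (in practice OpenCV’s cv2.minAreaRect does the same job); it relies on the fact that the minimum-area enclosing rectangle always has one side along an edge of the convex hull. The function names and the angle convention (degrees, counter-clockwise on screen with the y-axis pointing down) are my own assumptions, so verify the angle against your training format before using it.

```python
import math


def convex_hull(points):
    """Andrew's monotone chain. Returns the hull vertices in order."""
    pts = sorted(set(map(tuple, points)))
    if len(pts) < 3:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def min_area_rotated_box(points):
    """Return (cx, cy, w, h, angle_deg) of the smallest-area enclosing box.

    Assumed convention: image coordinates (y points down), angle measured
    counter-clockwise as seen on screen, in degrees.
    """
    hull = convex_hull(points)
    if len(hull) < 3:
        raise ValueError("need at least three non-collinear points")

    best = None
    for i in range(len(hull)):
        (x0, y0), (x1, y1) = hull[i], hull[(i + 1) % len(hull)]
        # Align the box's width axis with this hull edge.
        theta = math.atan2(-(y1 - y0), x1 - x0)
        c, s = math.cos(theta), math.sin(theta)
        # Project the hull onto the box's local width and height axes.
        xs = [c * x - s * y for x, y in hull]
        ys = [s * x + c * y for x, y in hull]
        w, h = max(xs) - min(xs), max(ys) - min(ys)
        # The small tolerance keeps the first candidate on floating-point ties.
        if best is None or w * h < best[0] - 1e-9:
            mx, my = (max(xs) + min(xs)) / 2, (max(ys) + min(ys)) / 2
            # Map the local box center back to image coordinates.
            cx, cy = c * mx + s * my, -s * mx + c * my
            best = (w * h, (cx, cy, w, h, math.degrees(theta)))
    return best[1]
```

Note that the same rectangle can be described by several (w, h, angle) combinations, for example with width and height swapped and the angle shifted by 90 degrees. If your training code expects a specific angle range, normalize the result accordingly.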

Install labelme

Labelme offers various options for installing the labeling GUI. Please refer to the instructions here: https://github.com/wkentaro/labelme

Creating polygons

Start labelme with labelme --autosave --nodata --keep-prev. The GUI allows you to select the images to label one by one or by directory. It is highly recommended to place the images to label in a single directory, since a JSON file with the labels is produced in the same directory as each image. Having all the images and labels in the same directory makes things a whole lot easier when it comes to training the actual model.

The --autosave flag enables auto-saving, so there is no need to press Ctrl+S on every image. The --nodata flag skips saving the actual image data in the JSON file that is produced for every image. Using --keep-prev can be considered optional, but it is very useful if the images are, for example, consecutive frames from a video, since the option copies the labels from the previously labeled image to the current image.
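For reference, each JSON file that labelme writes contains a "shapes" list with a label and the polygon points, plus the image path and size. The sketch below reads the polygons back using only the standard library. The helper name and the embedded example are made up for illustration (including the version string and coordinates); only the field names follow labelme’s file format.

```python
import json

# Hypothetical example of a labelme file saved with --nodata
# (imageData is null because the image bytes are not embedded).
EXAMPLE_JSON = """
{
  "version": "5.0.1",
  "flags": {},
  "shapes": [
    {
      "label": "ship",
      "points": [[10.0, 20.0], [110.0, 40.0], [105.0, 70.0], [5.0, 50.0]],
      "group_id": null,
      "shape_type": "polygon",
      "flags": {}
    }
  ],
  "imagePath": "ship_001.jpg",
  "imageData": null,
  "imageHeight": 480,
  "imageWidth": 640
}
"""


def polygons_from_labelme(json_text):
    """Extract the polygon annotations from the text of one labelme JSON file."""
    data = json.loads(json_text)
    polygons = []
    for shape in data.get("shapes", []):
        # Skip rectangles, circles, points and other non-polygon shapes.
        if shape.get("shape_type", "polygon") != "polygon":
            continue
        points = [(float(x), float(y)) for x, y in shape["points"]]
        polygons.append({"label": shape["label"], "points": points})
    return {
        "image_path": data.get("imagePath"),
        "height": data.get("imageHeight"),
        "width": data.get("imageWidth"),
        "polygons": polygons,
    }


parsed = polygons_from_labelme(EXAMPLE_JSON)
```

For real files, read the text with pathlib.Path(path).read_text() and pass it to the same function.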

Installing Detectron2

Before we can visualize the rotated bounding box annotations and train the model, we need to install Detectron2. Warning: this step may cause headaches.

Install PyTorch, OpenCV, and Detectron2

PyTorch has to be installed before Detectron2. This means we can’t provide a single clean requirements.txt file with all the dependencies, as there is no way to tell pip in which order to install the listed packages. Detectron2 also does not list PyTorch in its own install requirements, for compatibility reasons.

Depending on whether you want to use a CPU or a GPU (if available) with Detectron2, install the proper version from https://pytorch.org/. The Detectron2 installation documentation also offers some background and debug steps if there are issues.
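Once everything is installed, a quick sanity check can save some debugging time later. The following is a small sketch (the function name is my own) that reports which of the three packages can be found and whether PyTorch sees a CUDA-capable GPU. It only uses importlib from the standard library plus torch.cuda.is_available(), so it also runs on a machine where nothing is installed yet.

```python
import importlib.util


def check_environment():
    """Report which required packages are importable and whether CUDA is usable."""
    status = {
        name: importlib.util.find_spec(name) is not None
        for name in ("torch", "cv2", "detectron2")
    }
    if status["torch"]:
        import torch  # imported lazily so the check works without PyTorch

        status["cuda"] = bool(torch.cuda.is_available())
    else:
        status["cuda"] = False
    return status


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'OK' if ok else 'missing'}")
```

If "cuda" is reported as missing on a machine with a GPU, the installed PyTorch build is most likely the CPU-only one.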

Test the installation and visualize the dataset

I have provided an example of visualizing the annotated dataset in my blog GitHub repo. To test the installation and display the rotated bounding boxes, install the necessary dependencies with pip install -r requirements.txt in this directory.

The polygons are used to determine the rotated bounding boxes. (Image Source: Erik Mclean from Pexels)

To verify that the annotations are correct, run the visualization script with python visualize_dataset.py <path-to-dataset>. The example dataset for the ships is available here (please email me for access). As you can see, the polygons are correctly turned into rotated bounding boxes.
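If you are wondering what Detectron2 expects as input, its dataset format is a list of dicts, one per image, where each annotation carries a "bbox" and a "bbox_mode". For rotated boxes the mode is BoxMode.XYWHA_ABS, meaning (center x, center y, width, height, angle in degrees). The sketch below is my own illustration and not the code from the example repo: the function names and the dataset name are hypothetical, and the registration function imports Detectron2 lazily so the rest runs without it.

```python
# Integer value of detectron2.structures.BoxMode.XYWHA_ABS, used here so the
# record builder runs without Detectron2. With Detectron2 installed, prefer
# passing BoxMode.XYWHA_ABS itself.
XYWHA_ABS = 4


def make_record(file_name, image_id, height, width, boxes, category_ids,
                bbox_mode=XYWHA_ABS):
    """Build one Detectron2-style dataset dict from rotated boxes.

    boxes: iterable of (cx, cy, w, h, angle_deg) tuples.
    category_ids: one integer class index per box.
    """
    annotations = [
        {"bbox": [float(v) for v in box], "bbox_mode": bbox_mode, "category_id": int(cid)}
        for box, cid in zip(boxes, category_ids)
    ]
    return {
        "file_name": file_name,
        "image_id": image_id,
        "height": height,
        "width": width,
        "annotations": annotations,
    }


def register_dataset(name, records, class_names):
    """Register the records under a (hypothetical) dataset name."""
    from detectron2.data import DatasetCatalog, MetadataCatalog

    DatasetCatalog.register(name, lambda: records)
    MetadataCatalog.get(name).set(thing_classes=list(class_names))


record = make_record("ship_001.jpg", 0, 480, 640, [(57.5, 45.0, 102.0, 30.4, -11.3)], [0])
```

With Detectron2 installed, register_dataset("ships_train", [record], ["ship"]) would make the data available under that name.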

Training and evaluating the model

Predictions from the trained model. (Image Source: Erik Mclean from Pexels)

To run the training, run python train_rotated_bbox.py <path-to-dataset> --num-gpus <gpus> (the script is available here). The script and the rotated_bbox_config.yaml file contain various ways to configure the training; see the files for details. By default, the final and intermediate weights of the model are saved in the current working directory (model_*.pth).
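To give an idea of what a rotated configuration involves, here is a sketch of the Detectron2 config keys that, to my knowledge, need to change when moving a Faster R-CNN style model from axis-aligned to rotated boxes. This is not the content of rotated_bbox_config.yaml: the anchor angles, the class count, and the helper names are illustrative assumptions, so treat the values as a starting point.

```python
# Assumed overrides for rotated box detection; the values are illustrative.
ROTATED_OVERRIDES = {
    "MODEL.MASK_ON": False,
    # Rotated region proposal network and matching anchor generator.
    "MODEL.PROPOSAL_GENERATOR.NAME": "RRPN",
    "MODEL.ANCHOR_GENERATOR.NAME": "RotatedAnchorGenerator",
    "MODEL.ANCHOR_GENERATOR.ANGLES": [[-90, -60, -30, 0, 30, 60, 90]],
    # Five regression weights instead of four: (dx, dy, dw, dh, da).
    "MODEL.RPN.BBOX_REG_WEIGHTS": (1.0, 1.0, 1.0, 1.0, 1.0),
    # Rotated ROI heads and pooling.
    "MODEL.ROI_HEADS.NAME": "RROIHeads",
    "MODEL.ROI_HEADS.NUM_CLASSES": 1,  # assumption: a single "ship" class
    "MODEL.ROI_BOX_HEAD.POOLER_TYPE": "ROIAlignRotated",
    "MODEL.ROI_BOX_HEAD.BBOX_REG_WEIGHTS": (10.0, 10.0, 5.0, 5.0, 1.0),
}


def as_merge_list(overrides):
    """Flatten {key: value} into [key, value, ...] as cfg.merge_from_list expects."""
    flat = []
    for key, value in overrides.items():
        flat.extend([key, value])
    return flat


def apply_overrides(cfg, overrides=None):
    """Apply the overrides to a Detectron2 config node (e.g. from get_cfg())."""
    chosen = ROTATED_OVERRIDES if overrides is None else overrides
    cfg.merge_from_list(as_merge_list(chosen))
    return cfg
```

Keep in mind that the config alone is not enough: the data loading also has to keep the five-value boxes intact, which is why a dedicated training script is needed.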

To visualize predictions from the trained model, run python visualize_predictions.py <path-to-dataset> --weights <path-to-pth-model>.
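If you want to draw the predicted boxes yourself, for example with OpenCV, each prediction has to be turned from (center x, center y, width, height, angle) into four corner points. Here is a minimal sketch of that conversion. The function name is my own, and the rotation convention (degrees, counter-clockwise on screen with the y-axis pointing down) is how I understand Detectron2’s rotated boxes, so verify it by drawing one known box first.

```python
import math


def rotated_box_corners(cx, cy, w, h, angle_deg):
    """Return the four corner points of a rotated box in image coordinates.

    Assumed convention: angle in degrees, counter-clockwise as seen on
    screen, with the y-axis pointing down.
    """
    theta = math.radians(angle_deg)
    c, s = math.cos(theta), math.sin(theta)
    # Corners of the unrotated box, relative to its center.
    local = [(-w / 2, h / 2), (-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2)]
    # Rotate each corner around the center, then shift to the center position.
    return [(c * x + s * y + cx, -s * x + c * y + cy) for x, y in local]


corners = rotated_box_corners(100.0, 50.0, 40.0, 20.0, 30.0)
```

The resulting points can be rounded to integers and passed to a polygon drawing function to overlay the box on the image.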

Summary

Detecting rotated bounding boxes is a natural extension to detecting axis-aligned bounding boxes. Unfortunately, there aren’t many great examples available of how to achieve this. I hope this tutorial and the provided example are helpful in achieving rotated bounding box detection!

Thank you for reading! Feel free to give feedback and improvement ideas!

You can find me on LinkedIn, Twitter, and GitHub. Also check my personal website/blog for topics covering ML and web/mobile apps!